What Game Theory Reveals About Life, The Universe, and Everything

This is about the most famous problem in game theory. Problems of this sort pop up everywhere, from nations locked in conflict to roommates doing the dishes. Even game shows have been based around this concept. Figuring out the best strategy can mean the difference between life and death, war and peace, flourishing and the destruction of the planet. And in the mechanics of this game, we may find the very source of one of the most unexpected phenomena in nature: cooperation.

On the 3rd of September, 1949, an American weather monitoring plane collected air samples over Japan. In those samples, they found traces of radioactive material. The Navy quickly collected and tested rainwater samples from their ships and bases all over the world. They also detected small amounts of Cerium-141 and Yttrium-91. But these isotopes have half-lives of one or two months, so they must have been produced recently and the only place they could have come from was a nuclear explosion.

But the US hadn't performed any tests that year, so the only possible conclusion was that the Soviet Union had figured out how to make a nuclear bomb. This was the news the Americans had been dreading. Their military supremacy, achieved through the Manhattan Project, was quickly fading. As one voice of the time put it, "This makes the problem of Western Europe and the United States far more serious than it was before and perhaps makes the imminence of war greater." Some thought their best course of action was to launch an unprovoked nuclear strike against the Soviets while they were still ahead.

In the words of Navy Secretary Matthews, the US should become "aggressors for peace". John von Neumann, the founder of game theory, said, "If you say why not bomb them tomorrow, I say, why not bomb them today? If you say today at five o'clock, I say why not at one o'clock?" Something needed to be done about nuclear weapons, and fast. But what? In 1950, the RAND Corporation, a US-based think tank, was studying this question. As part of this research, they turned to game theory.

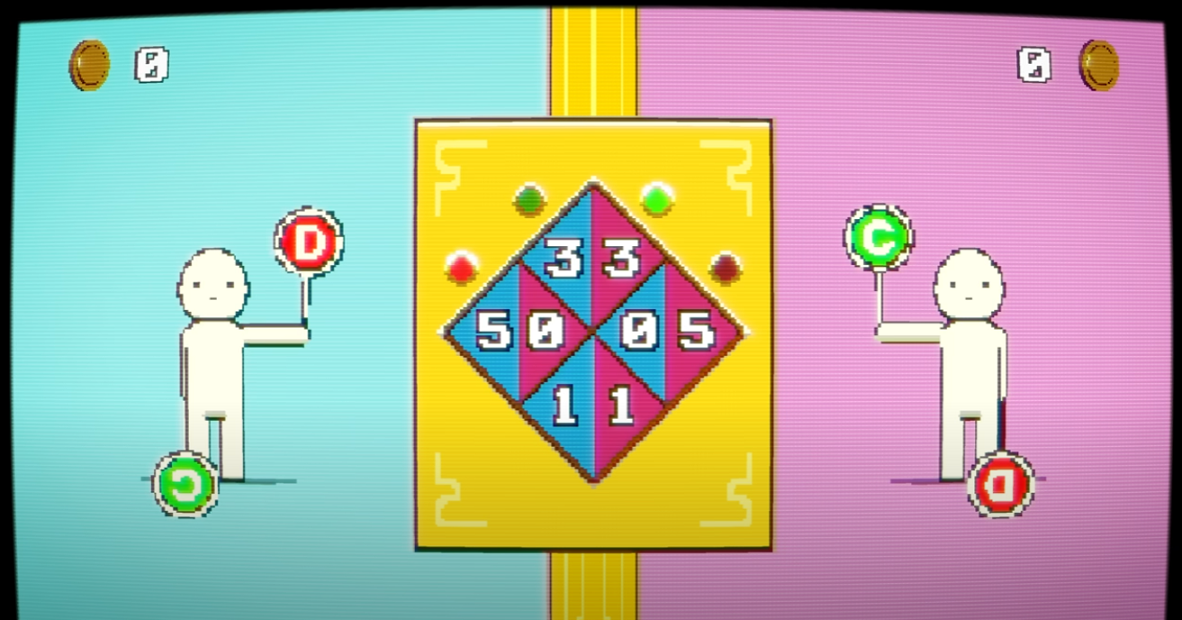

That same year, two mathematicians at RAND had invented a new game, one which unbeknownst to them at the time, closely resembled the US-Soviet conflict. This game is now known as the prisoner's dilemma. So let's play a game. A banker with a chest full of gold coins invites you and another player to play against each other. You each get two choices. You can cooperate or you can defect. If you both cooperate, you each get three coins. If one of you cooperates, but the other defects, then the one who defected gets five coins and the other gets nothing.

And if you both defect, then you each get a coin. The goal of the game is simple: to get as many coins as you can. So what would you do? Suppose your opponent cooperates, then you could also cooperate and get three coins or you could defect and get five coins, instead. So you are better off defecting, but what if your opponent defects, instead? Well, you could cooperate and get no coins or you could defect and at least get one coin.
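The case-by-case reasoning above can be checked mechanically. The sketch below encodes the banker's payoffs as a lookup table and asks, for each possible opponent move, which reply earns more coins. The names `PAYOFFS` and `best_response` are illustrative choices, not part of the original game description.

```python
# Payoffs from the text as (my coins, opponent's coins).
# "C" = cooperate, "D" = defect.
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def best_response(opponent_move):
    """Return the move that earns me the most coins against a fixed opponent move."""
    return max("CD", key=lambda my_move: PAYOFFS[(my_move, opponent_move)][0])


for opponent_move in "CD":
    print(f"Opponent plays {opponent_move}: best reply is {best_response(opponent_move)}")
# Opponent plays C: best reply is D
# Opponent plays D: best reply is D
```

Defecting is the best reply in both cases, even though mutual defection pays each player 1 coin and mutual cooperation pays 3.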

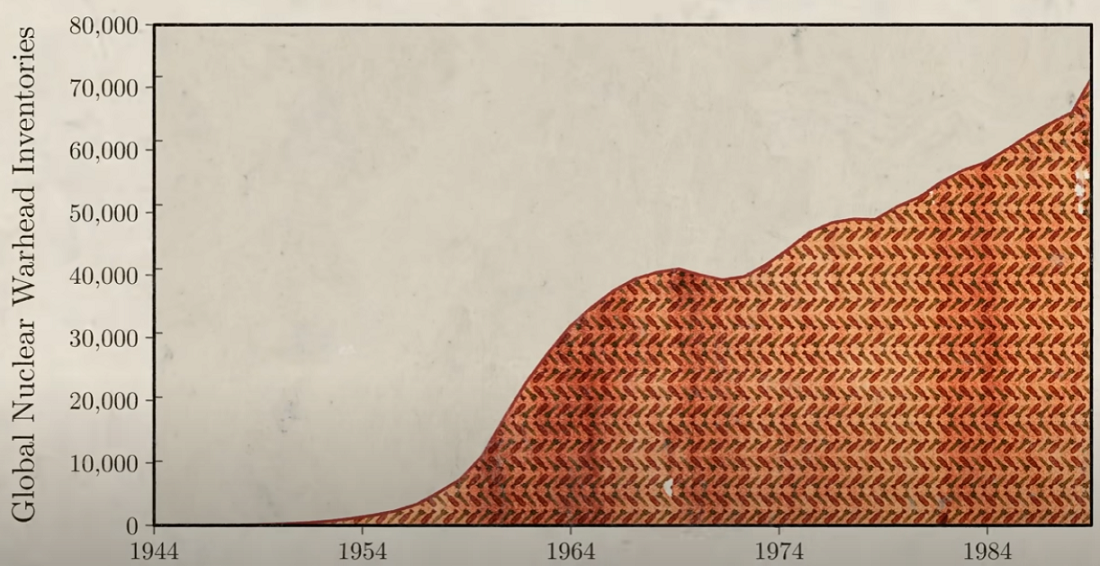

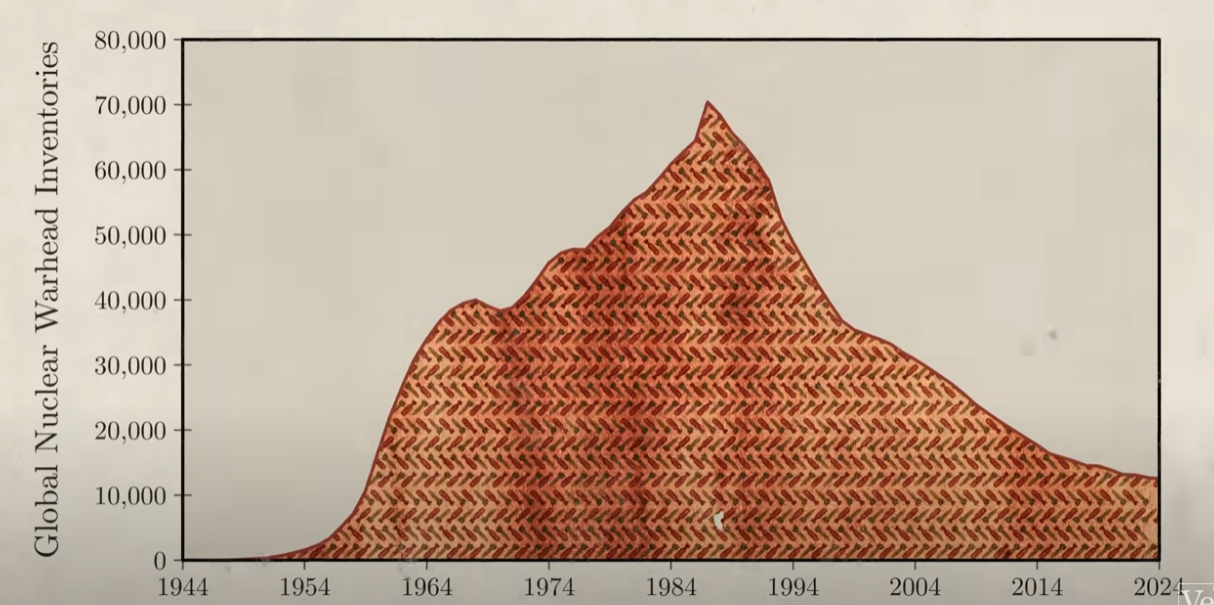

So no matter what your opponent does, your best option is always to defect. Now, if your opponent is also rational, they will reach the same conclusion and therefore also defect. As a result, when you both act rationally, you both end up in a suboptimal situation, getting one coin each when you could have gotten three instead. In the case of the US and Soviet Union, this led both countries to develop huge nuclear arsenals of tens of thousands of nuclear weapons each, more than enough to destroy each other many times over.

But since both countries had nukes, neither could use them. And both countries spent around $10 trillion developing these weapons. Both would've been better off if they had cooperated and agreed not to develop this technology further. But since they both acted in their own best interest, they ended up in a situation where everyone was worse off. The prisoner's dilemma is one of the most famous games in game theory. Thousands and thousands of papers have been published on versions of this game. In part, that's because it pops up everywhere.

Impalas living between African woodlands and savannahs are prone to catching ticks, which can lead to infectious diseases, paralysis, even death. So it's important for impalas to remove ticks, and they do this by grooming. But they can't reach all the spots on their bodies, so they need another impala to groom them. Now, grooming someone else comes at a cost. It costs saliva, electrolytes, time and attention, all vital resources under the hot African sun where a predator could strike at any moment.

So for the other impala, it would be best not to pay this cost, but then again, it too will need help grooming. So all impalas face a choice: should they groom each other or not? In other words, should they cooperate or defect? Well, if they only interact once, then the rational solution is always to defect. That other impala is never gonna help you, so why bother? But the thing about a lot of problems is that they're not a single prisoner's dilemma.

Impalas see each other day after day and the same situation keeps happening over and over again. So that changes the problem because instead of playing the prisoner's dilemma just once, you're now playing it many, many times. And if I defect now, then my opponent will know that I've defected and they can use that against me in the future. So what is the best strategy in this repeated game?

That is what Robert Axelrod, a political scientist, wanted to find out. So in 1980 he decided to hold a computer tournament. He invited some of the world's leading game theorists from many different subjects to submit computer programs that would play each other. Axelrod called these programs strategies. Each strategy would face off against every other strategy and against a copy of itself, and each matchup would go for 200 rounds. That's important and we'll come back to it.

Now, Axelrod used points instead of coins, but the payoffs were the same. The goal of the tournament was to win as many points as possible and in the end, the whole tournament was repeated five times over to ensure the success was robust and not just a fluke. Axelrod gave an example of a simple strategy. It would start each game by cooperating and only defect after its opponent had defected twice in a row.

In total, Axelrod received 14 strategies and he added a 15th called Random, which cooperates or defects at random, each with 50% probability. All strategies were loaded onto a single computer where they faced off against each other.

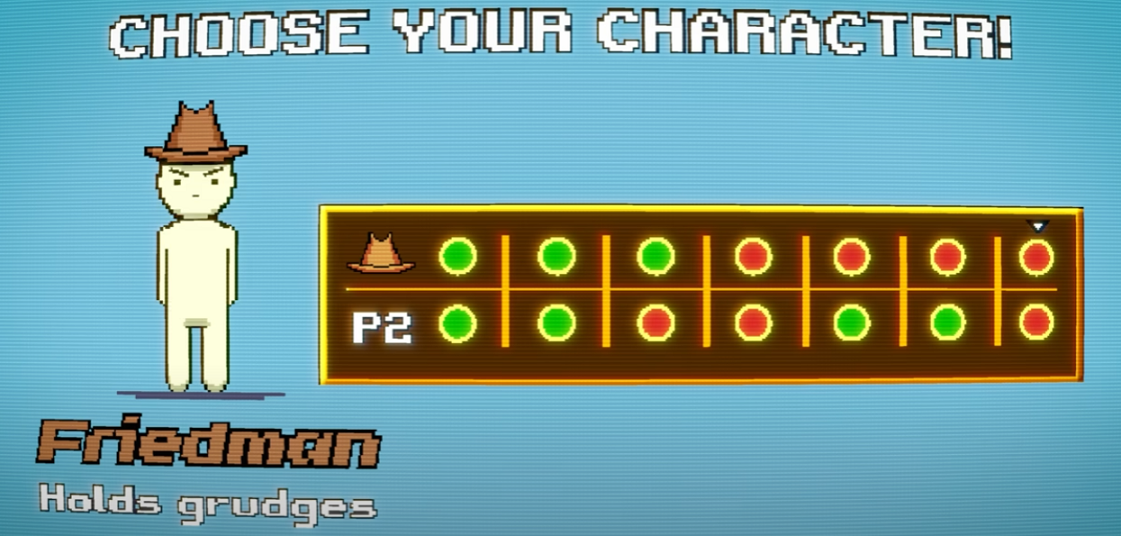

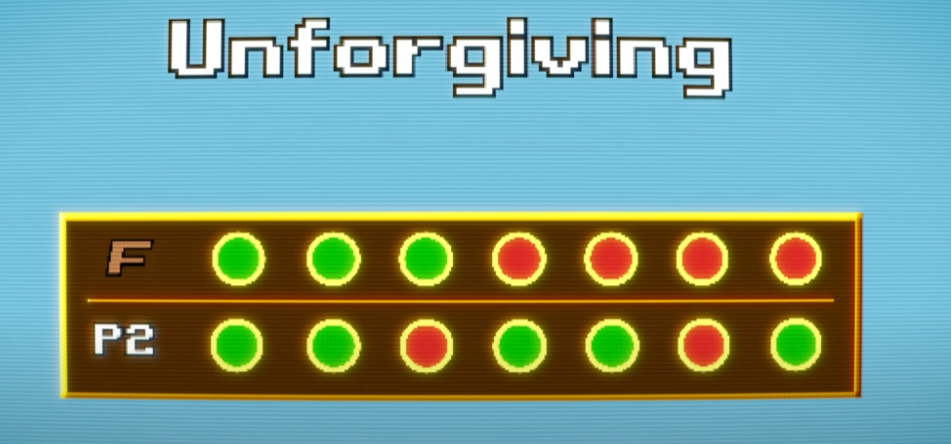

One of the strategies was called Friedman. It starts off by cooperating, but if its opponent defects just once, it will keep defecting for the remainder of the game.

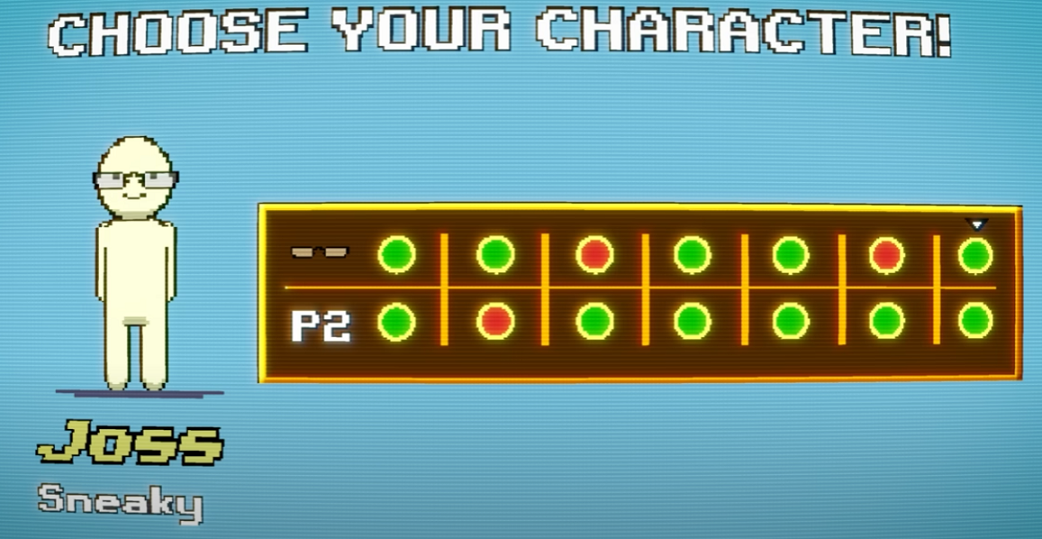

Another strategy was called Joss. It also starts by cooperating and then copies what the other player did on the last move, but around 10% of the time, Joss gets sneaky and defects.

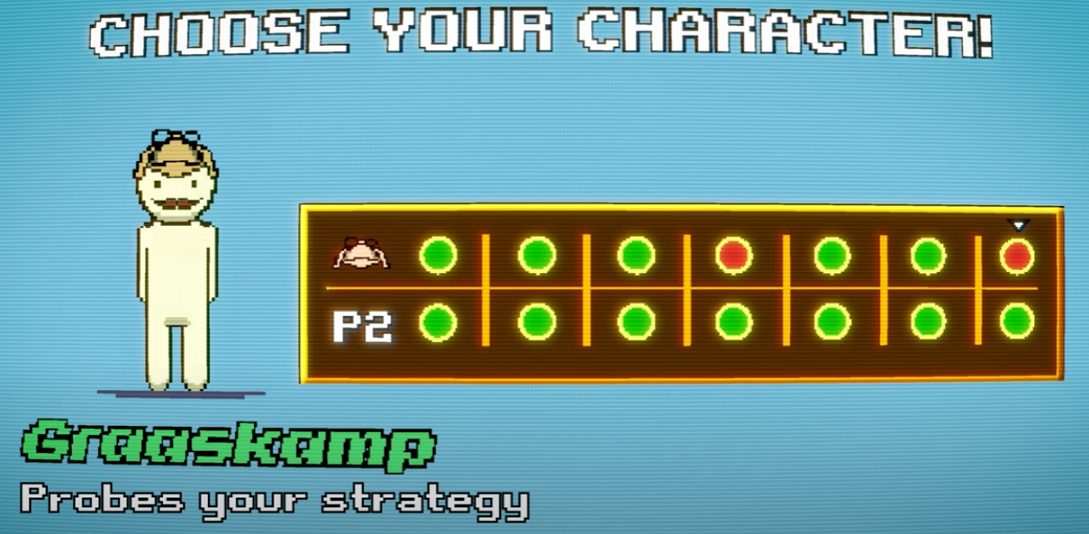

There was also a rather elaborate strategy called Graaskamp. This strategy works the same as Joss, but instead of defecting probabilistically, Graaskamp defects in the 50th round to try and probe the strategy of its opponent and see if it can take advantage of any weaknesses.

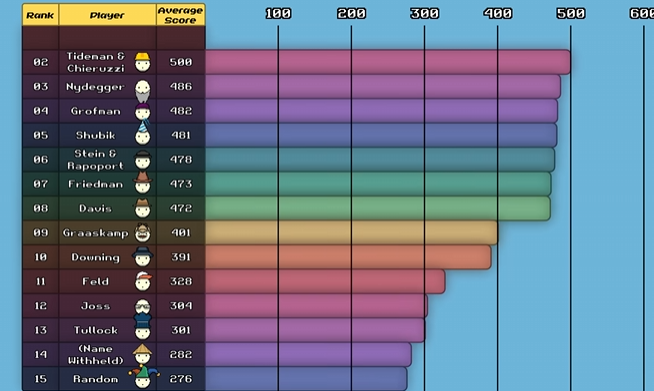

The most elaborate strategy was Name Withheld with 77 lines of code. After all the games were played, the results were tallied up and the leaderboard established. The crazy thing was that the simplest program ended up winning, a program that came to be called Tit for Tat.

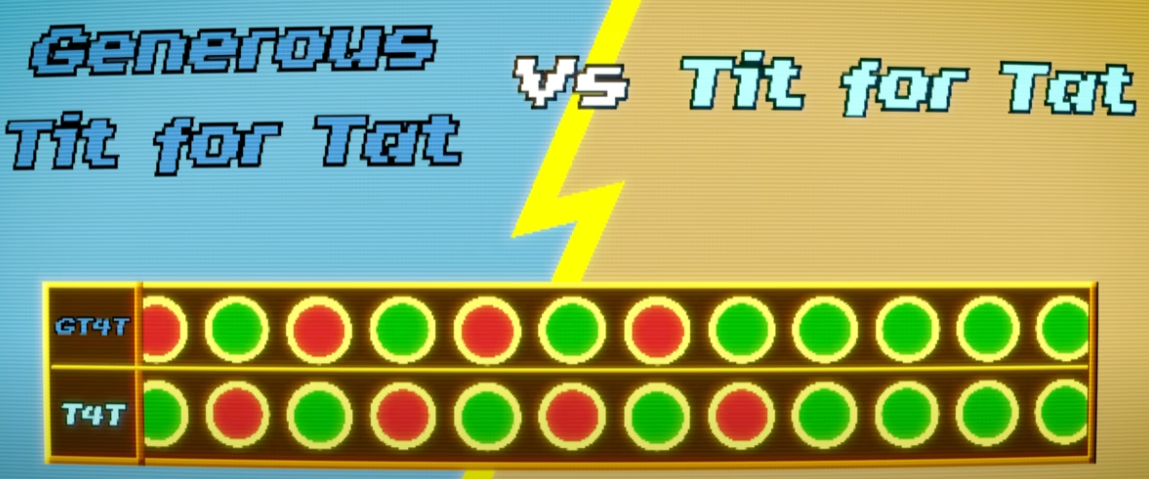

Tit for Tat starts by cooperating and then it copies exactly what its opponent did in the last move. So it would follow cooperation with cooperation and defection with defection, but only once if its opponent goes back to cooperating.

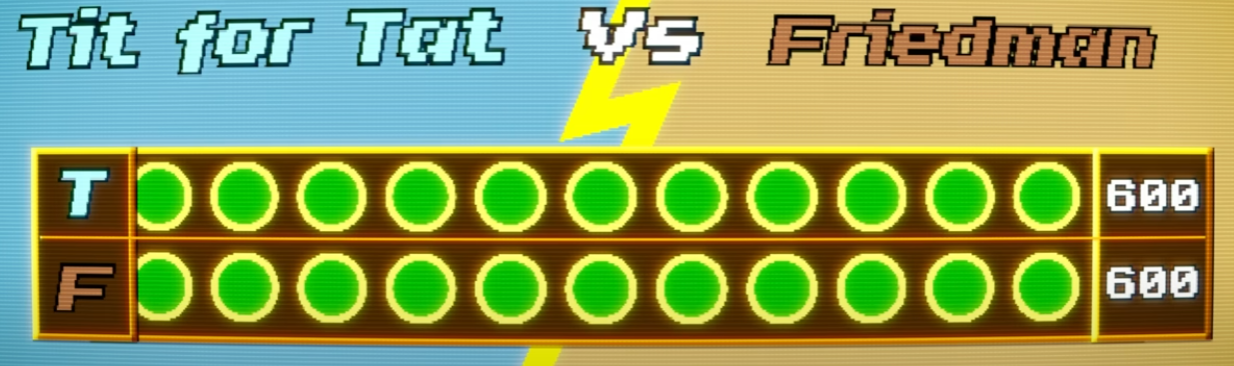

When Tit for Tat played against Friedman, both started by cooperating and they kept cooperating, both ending up with perfect scores for complete cooperation.
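A minimal sketch of this matchup, under the assumption that a strategy can be written as a function of the two move histories (its own and its opponent's). That representation, and the names `tit_for_tat`, `friedman` and `play_match`, are illustrative choices; they are not Axelrod's original programs.

```python
PAYOFFS = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
           ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


def tit_for_tat(mine, theirs):
    # Cooperate first, then copy the opponent's previous move.
    return theirs[-1] if theirs else "C"


def friedman(mine, theirs):
    # Cooperate until the opponent defects once, then defect forever.
    return "D" if "D" in theirs else "C"


def play_match(strategy_a, strategy_b, rounds=200):
    """Play a fixed-length match and return both total scores."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        pay_a, pay_b = PAYOFFS[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


print(play_match(tit_for_tat, friedman))  # (600, 600)
```

Neither strategy ever defects first, so all 200 rounds pay 3 points to each side.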

When Tit for Tat played against Joss, they too started by cooperating but then on the sixth move, Joss defected. This sparked a series of back and forth defections, a sort of echo effect. Okay, so now you've got this alternating thing which will remind you of some of the politics of the world today where we have to do something to you because of what you did to us.

And then when this weird program throws in a second unprovoked defection, now it's really bad because now both programs are gonna defect on each other for the rest of the game. And that's also like some of the things we're seeing in politics today and in international relations. As a result of these mutual retaliations, both Tit for Tat and Joss did poorly. But because Tit for Tat managed to cooperate with enough other strategies, it still won the tournament.
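The echo effect can be traced move by move. Joss defects at random, so to keep the trace reproducible the sketch below uses a scripted stand-in that plays Tit for Tat but also defects, unprovoked, on fixed rounds. The stand-in and the choice of round 11 for the second defection are assumptions made for illustration; only the sixth-move defection comes from the account above.

```python
def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else "C"


def make_scripted_joss(sneaky_rounds):
    """Tit for Tat that also defects, unprovoked, on the given 1-based rounds."""
    def strategy(mine, theirs):
        round_number = len(mine) + 1
        if round_number in sneaky_rounds:
            return "D"
        return tit_for_tat(mine, theirs)
    return strategy


def trace(strategy_a, strategy_b, rounds):
    """Return the move sequences of both players as strings."""
    hist_a, hist_b = [], []
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        hist_a.append(move_a)
        hist_b.append(move_b)
    return "".join(hist_a), "".join(hist_b)


joss_moves, tft_moves = trace(make_scripted_joss({6, 11}), tit_for_tat, rounds=14)
print(joss_moves)  # CCCCCDCDCDDDDD
print(tft_moves)   # CCCCCCDCDCDDDD
```

After round 6 the two players take turns punishing each other. The second unprovoked defection in round 11 lands on a round where Tit for Tat was already retaliating, so both defect at once and neither ever sees a cooperation to copy again.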

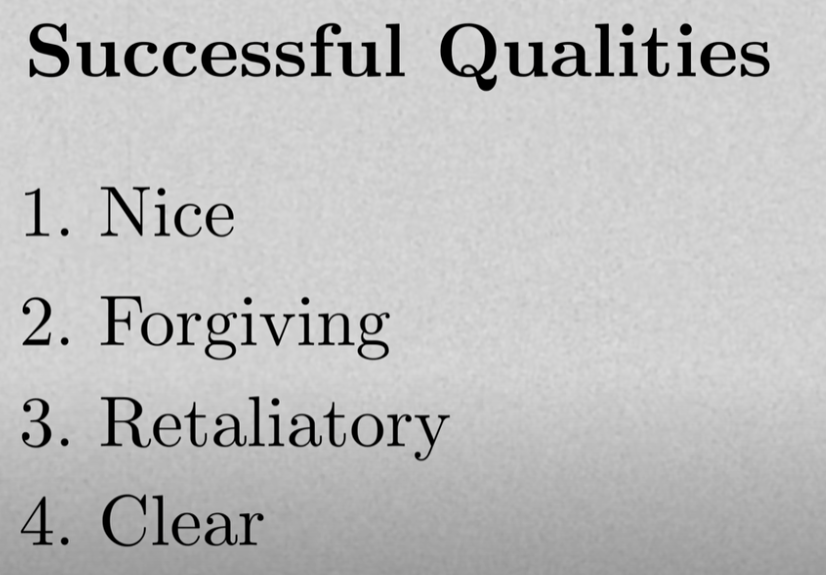

It was the simplest strategy that did the best, so Axelrod analyzed how that happened. He found that all the best-performing strategies, including Tit for Tat, shared four qualities. First, they were all nice, which just means they are not the first to defect. So Tit for Tat is a nice strategy; it can defect but only in retaliation. The opposite of nice is nasty. That's a strategy that defects first. So Joss is nasty.

Out of the 15 strategies in the tournament, eight were nice and seven nasty. The top eight strategies were all nice, and even the worst-performing nice strategy still far outscored the best-performing nasty one.

The second important quality was being forgiving. A forgiving strategy is one that can retaliate but doesn't hold a grudge. So Tit for Tat is a forgiving strategy. It retaliates when its opponent defects but doesn't let defections from before the last round influence its current decisions.

Friedman, on the other hand, is maximally unforgiving. After the first defection from the opponent, it would defect for the rest of the game. That might feel good to do, but it doesn't end up working out well in the long run. This conclusion that it pays to be nice and forgiving came as a shock to the experts. Many had tried to be tricky and create subtle nasty strategies to beat their opponent and eke out an advantage, but they all failed. Instead, in this tournament, nice guys finished first.

Now, Tit for Tat is quite forgiving, but it's possible to be even more forgiving. Axelrod's sample strategy only defects after its opponent has defected twice in a row. It was called Tit for Two Tats. That might sound overly generous, but when Axelrod ran the numbers, he found that if anyone had submitted the sample strategy, they would have won the tournament. After Axelrod published his analysis and circulated it among game theorists, he said, "Now that we all know what worked well, let's try again." So he announced a second tournament where everything would be the same except for one change: the number of rounds per game.

In the first tournament, each game lasted precisely 200 rounds. That is important because if you know when the last round is, then there's no reason to cooperate in that round. So you're better off defecting. Of course, your opponent should reason the same and also defect in the last round. But if you both anticipate defection in the last round, then there's no reason for you to cooperate in the second-to-last round, or the round before that, and so on, all the way to the very first round.

In Axelrod's second tournament, it was a crucial factor that players didn't know exactly how long they would be playing. They knew that, on average, it would be 200 rounds, but a random number generator prevented them from knowing with certainty. For this second tournament, Axelrod received 62 entries and again added Random. The contestants had gotten the results and analysis from the first tournament and could use this information to their advantage.
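One simple way to get a game of unknown length that still averages 200 rounds is to end the game after each round with a small fixed probability. The sketch below assumes an ending probability of 1/200 per round; that value and the function name `random_match_length` are assumptions for illustration, not a description of the exact procedure Axelrod used.

```python
import random


def random_match_length(rng, end_probability=1 / 200):
    """Draw a match length: after every round the game ends with a fixed probability."""
    rounds = 1
    while rng.random() >= end_probability:
        rounds += 1
    return rounds


rng = random.Random(42)  # seeded only so that reruns give the same draws
lengths = [random_match_length(rng) for _ in range(5)]
print(lengths)
```

Because every round has the same chance of being the last, no round can be singled out as the final one, which removes the starting point of the backward-reasoning argument.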

This created two camps. Some thought that being nice and forgiving were clearly winning qualities, so they submitted nice and forgiving strategies. One even submitted Tit for Two Tats. The second camp anticipated that others would be nice and extra forgiving, so they submitted nasty strategies to try to take advantage of them.

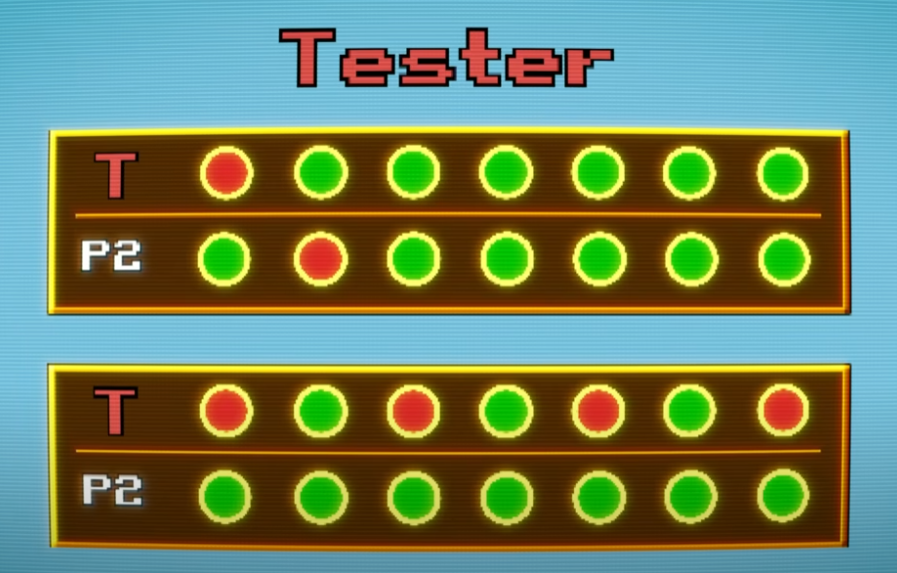

One such strategy was called Tester. It would defect on the first move to see how its opponent reacted. If it retaliated, Tester would apologize and play Tit for Tat for the remainder of the game. If it didn't retaliate, Tester would defect every other move after that. But again, being nasty didn't pay. Once again, Tit for Tat was the most effective. Nice strategies did much better, with only one of the top 15 not being nice. Similarly, in the bottom 15, only one was not nasty.

After the second tournament, Axelrod identified other qualities that distinguished the better-performing strategies. The third was being retaliatory, which means if your opponent defects, strike back immediately—don't be a pushover. Always Cooperate is a total pushover, and so it's very easy to take advantage of. Tit for Tat, on the other hand, is very hard to take advantage of.

The last quality Axelrod identified was being clear. Programs that were too opaque or too similar to a random program were difficult to figure out. Since opponents couldn't establish any pattern of trust with them, they often defaulted to defecting. What's mind-blowing is that these four principles (being nice, forgiving, retaliatory, and clear) are a lot like the morality that has evolved worldwide and is often summarized as an eye for an eye. It's not the Christian philosophy of turning the other cheek but an older philosophy.

Interestingly, while Tit for Two Tats would have won the first tournament, it only came 24th in the second tournament. This highlights an important fact: in the repeated Prisoner's Dilemma, there is no single best strategy. The best-performing strategy always depends on the other strategies it interacts with. For example, if you put Tit for Tat in an environment with only the ultimate bullies who always defect, then Tit for Tat comes in last. Axelrod wanted to see whether Tit for Tat did well because it took advantage of weak strategies. So he ran a simulation where successful strategies would increase in number and unsuccessful ones would diminish.

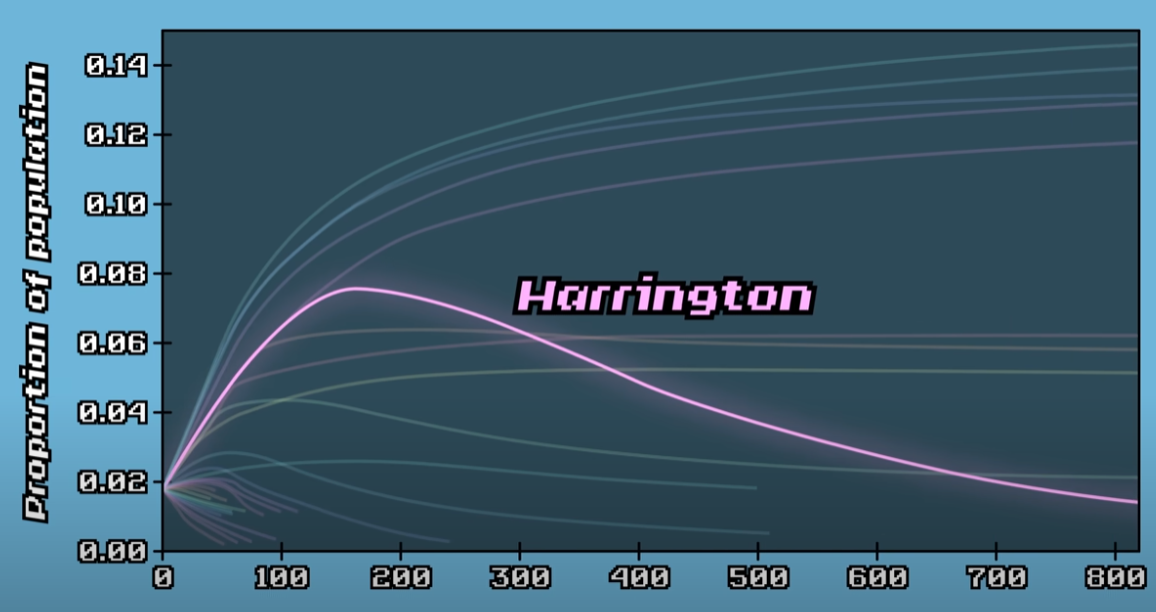

In this simulation, the worst-performing strategies quickly shrank and went extinct, while the top-performing strategies became more common. Harrington, the only nasty strategy in the top 15, first grew quickly, but as the strategies it preyed on disappeared, Harrington's numbers also dropped. This simulation tested how well a strategy performed against other successful strategies. After a thousand generations, the proportions stabilized, and only nice strategies survived. Again, Tit for Tat came out on top, representing 14.5% of the total population. This process resembles evolution, though with a subtle difference—there were no mutations, making it more of an ecological simulation.
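The same kind of dynamic can be sketched on a toy scale. The code below is not Axelrod's simulation: it assumes only four strategies, fixed 200-round matches, made-up starting shares, and an update rule in which each strategy's share of the next generation is proportional to its current share times its average score against the current population.

```python
PAYOFFS = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
           ("D", "C"): (5, 0), ("D", "D"): (1, 1)}

STRATEGIES = {
    "tit_for_tat": lambda mine, theirs: theirs[-1] if theirs else "C",
    "friedman": lambda mine, theirs: "D" if "D" in theirs else "C",
    "always_cooperate": lambda mine, theirs: "C",
    "always_defect": lambda mine, theirs: "D",
}


def match_score(strategy_a, strategy_b, rounds=200):
    """Total points strategy_a earns against strategy_b."""
    hist_a, hist_b, score_a = [], [], 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        score_a += PAYOFFS[(move_a, move_b)][0]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a


# All strategies here are deterministic, so each pairing is scored once.
SCORES = {(a, b): match_score(fa, fb)
          for a, fa in STRATEGIES.items() for b, fb in STRATEGIES.items()}


def next_generation(shares):
    """Grow or shrink each share in proportion to its average score."""
    fitness = {a: sum(shares[b] * SCORES[(a, b)] for b in shares) for a in shares}
    mean_fitness = sum(shares[a] * fitness[a] for a in shares)
    return {a: shares[a] * fitness[a] / mean_fitness for a in shares}


# Assumed starting mix: plenty of pushovers for the nasty strategy to feed on.
shares = {"tit_for_tat": 0.1, "friedman": 0.1,
          "always_cooperate": 0.6, "always_defect": 0.2}
history = [shares]
for _ in range(100):
    shares = next_generation(shares)
    history.append(shares)

peak_defect = max(h["always_defect"] for h in history)
print(f"peak share of always_defect: {peak_defect:.2f}")
print({name: round(share, 3) for name, share in shares.items()})
```

With this starting mix, the always-defect strategy should first grow by exploiting the unconditional cooperators and then collapse once they become scarce, which mirrors the rise and fall described for Harrington.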

But what if the world started differently? Imagine a hostile environment populated mainly by defectors, except for a small cluster of Tit for Tat players who frequently interacted with each other. Over time, they would build up points, leading to more offspring, and eventually take over the population. Axelrod showed that a small island of cooperation can emerge, spread, and take over the world. Cooperation can emerge even in a population of self-interested individuals who are not trying to be good-hearted. You don't have to be altruistic—just looking out for yourself can still lead to cooperation.
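A rough calculation shows how small that cluster can be. The sketch below assumes fixed 200-round matches with the payoffs used earlier, and assumes that the native defectors meet essentially only each other. The symbol `p`, the share of a Tit for Tat newcomer's matches that are played against other Tit for Tat players, is introduced here for illustration.

```python
from fractions import Fraction

ROUNDS = 200
tft_vs_tft = 3 * ROUNDS             # mutual cooperation every round: 600
tft_vs_defector = 0 + (ROUNDS - 1)  # exploited once, then mutual defection: 199
defector_vs_defector = 1 * ROUNDS   # mutual defection every round: 200


def newcomer_average(p):
    """Average match score of a Tit for Tat player who meets its own kind with share p."""
    return p * tft_vs_tft + (1 - p) * tft_vs_defector


# Smallest p at which the newcomers match the natives' score of 200.
threshold = Fraction(defector_vs_defector - tft_vs_defector,
                     tft_vs_tft - tft_vs_defector)
print(threshold)                             # 1/401
print(newcomer_average(Fraction(1, 100)))    # 20301/100
```

Under these assumptions, a Tit for Tat player alone among defectors scores 199 against the natives' 200 and so does worst, but it already comes out ahead once more than about 0.25% of its matches are with other Tit for Tat players.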

Some argue that this could explain how we moved from a world of completely selfish organisms, where every being only cared for itself, to one where cooperation emerged and flourished. From impalas grooming each other to fish cleaning sharks, many life forms experience conflicts similar to the Prisoner's Dilemma. But because they don't interact just once, they can both be better off by cooperating. This doesn't require trust or conscious thought; the strategy can be encoded in DNA. As long as it performs better than other strategies, it can take over a population.

Axelrod's insights were applied to areas like evolutionary biology and international conflicts. However, his original tournaments didn't account for one factor—random errors in the game. What happens if there's some noise in the system? For example, one player tries to cooperate, but it comes across as a defection. Small errors like these happen all the time in the real world. A striking example is from 1983 when the Soviet early warning system falsely detected the launch of an intercontinental ballistic missile from the US, even though the US had launched nothing.

The system had mistaken sunlight reflecting off high-altitude clouds for a ballistic missile. Thankfully, Stanislav Petrov, the Soviet officer on duty, dismissed the alarm. This example highlights the potential consequences of signal errors and the importance of studying the effects of noise on strategic decision-making. The term "game theory" might sound trivial, as if it belongs to a children's game, but in reality, it deals with life-and-death matters. During the Cold War, these strategic interactions could have led to global annihilation, proving that these "games" were anything but trivial.

When Tit for Tat plays against itself in a noisy environment, both strategies start by cooperating. However, if a single cooperation is mistakenly perceived as a defection, the other Tit for Tat retaliates, setting off a chain of alternating retaliations. If further cooperation is misinterpreted as defection, the game devolves into constant mutual defection. Over time, both players earn only a third of the points they would in a perfect environment. This shows that while Tit for Tat initially performs well, it can fail under noisy conditions. To fix this, a reliable way to break these retaliation loops is needed.
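The collapse can be reproduced with a small simulation. The noise model below is an assumption chosen to match the description above: each time a player observes a cooperation, there is a fixed chance it is misread as a defection, while defections are always read correctly. The 5% misread rate, the match length, and all names are illustrative.

```python
import random

PAYOFFS = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
           ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


def tit_for_tat(seen):
    # `seen` holds the opponent's moves as this player perceived them.
    return seen[-1] if seen else "C"


def perceive(move, misread_probability, rng):
    """A cooperation is sometimes misread as a defection; defections are read correctly."""
    if move == "C" and rng.random() < misread_probability:
        return "D"
    return move


def noisy_match(rounds, misread_probability, rng):
    """Tit for Tat against itself; returns both total scores."""
    seen_by_a, seen_by_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = tit_for_tat(seen_by_a)
        move_b = tit_for_tat(seen_by_b)
        pay_a, pay_b = PAYOFFS[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        seen_by_a.append(perceive(move_b, misread_probability, rng))
        seen_by_b.append(perceive(move_a, misread_probability, rng))
    return score_a, score_b


rounds = 10_000
score_a, score_b = noisy_match(rounds, misread_probability=0.05, rng=random.Random(1))
print(f"share of the perfect score: {score_a / (3 * rounds):.2f}")
```

Under this model, mutual defection is a trap: once both players are defecting there is no cooperation left to observe, so the long-run score tends toward 1 point per round out of a possible 3.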

One effective solution is adding a small degree of forgiveness. Instead of retaliating after every defection, a modified Tit for Tat retaliates only about nine out of ten times. This prevents endless retaliation while maintaining enough deterrence to avoid exploitation. In experiments where noise and generosity were introduced, this slightly more forgiving approach performed significantly better. Tit for Tat is effective, but it can never outperform its opponent—it can only draw or lose. Despite this, when all interactions are tallied, it often emerges as the top strategy.
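The effect of a little forgiveness can be sketched by comparing two self-play matches under the same noise. The assumptions here are a 5% chance that a cooperation is misread as a defection, a 10% chance of letting a perceived defection go unpunished, and a 20,000-round match so that averages settle. The function and parameter names are illustrative.

```python
import random

PAYOFFS = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
           ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


def make_tit_for_tat(forgiveness, rng):
    """Tit for Tat that ignores a perceived defection with probability `forgiveness`."""
    def strategy(seen):
        if seen and seen[-1] == "D" and rng.random() >= forgiveness:
            return "D"
        return "C"
    return strategy


def perceive(move, misread_probability, rng):
    # Only cooperations can be misread (as defections) in this noise model.
    if move == "C" and rng.random() < misread_probability:
        return "D"
    return move


def average_payoff(forgiveness, rounds=20_000, misread_probability=0.05, seed=1):
    """Average points per round for one player when the strategy plays a copy of itself."""
    rng = random.Random(seed)
    player_a = make_tit_for_tat(forgiveness, rng)
    player_b = make_tit_for_tat(forgiveness, rng)
    seen_by_a, seen_by_b, score_a = [], [], 0
    for _ in range(rounds):
        move_a, move_b = player_a(seen_by_a), player_b(seen_by_b)
        score_a += PAYOFFS[(move_a, move_b)][0]
        seen_by_a.append(perceive(move_b, misread_probability, rng))
        seen_by_b.append(perceive(move_a, misread_probability, rng))
    return score_a / rounds


plain = average_payoff(forgiveness=0.0)     # always retaliates
generous = average_payoff(forgiveness=0.1)  # retaliates about nine times out of ten
print(f"plain: {plain:.2f} points per round, generous: {generous:.2f} points per round")
```

With these assumed parameters, the plain version should get stuck near 1 point per round, while the generous version keeps escaping the retaliation loop and should stay well above 2.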

By contrast, the "always defect" strategy never loses an individual game—it can only draw or win. However, in the long run, it performs poorly. This challenges a common misconception about winning. Many assume that to win, they must beat the opponent. While this is true in zero-sum games like chess or poker, real life is rarely zero-sum. Success doesn't always come from taking away another's resources but from finding win-win situations. The "banker" in real life is the world itself, and cooperation can unlock rewards for all involved.

The Cold War offers a powerful example of this principle. From 1950 to 1986, the U.S. and Soviet Union struggled to cooperate, leading to an arms race. However, in the late 1980s, they began gradually reducing their nuclear stockpiles. Instead of agreeing to eliminate all nuclear weapons at once—turning disarmament into a single prisoner's dilemma—they disarmed incrementally. Each year, a small number of nukes were removed, with both sides verifying compliance before proceeding. This approach, based on iterative cooperation, helped resolve conflict and de-escalate tensions.

Since Axelrod's tournaments over 40 years ago, researchers have continued studying optimal strategies in various environments. They have explored different payoffs, errors, and even evolutionary adaptations. While Tit for Tat and its generous variant do not always win, Axelrod's main lessons remain true: be nice, be forgiving, but don't be a pushover. Anatol Rapoport, who submitted Tit for Tat to the tournament, initially had reservations, as he leaned toward even greater forgiveness. Nonetheless, his strategy proved to be one of the most effective in fostering cooperation.

One of the defining aspects of life is the ability to make choices—choices that not only shape our future but also impact those around us. In the short term, the environment determines who succeeds, but in the long run, individuals and societies shape their environment. The game of life is ultimately about making wise decisions, as their effects can extend far beyond what we immediately perceive.